In short: Anthropic has agreed to access approximately 3.5 gigawatts of next-generation Google TPU compute capacity via Broadcom starting in 2027, marking its largest infrastructure commitment to date. The company has also revealed that its revenue run rate has now surpassed $30 billion, a significant increase from roughly $9 billion at the end of 2025.

Anthropic has secured a major agreement for next-generation compute capacity through its collaboration with Google and Broadcom. The deal, announced on April 6, 2026, gives Anthropic access to about 3.5 gigawatts of Google tensor processing unit (TPU) capacity via Broadcom, beginning in 2027. That is a considerable expansion from the 1 gigawatt already provided to Anthropic in 2026.

Krishna Rao, the chief financial officer of Anthropic, emphasized the importance of this agreement, calling it "our most significant compute commitment to date." He noted that this deal continues the company’s disciplined strategy for scaling its infrastructure. The majority of the new compute capacity will be based in the United States, further extending Anthropic’s commitment made in November 2025 to invest $50 billion in AI computing infrastructure in the country.

Collaboration Between Three Key Players

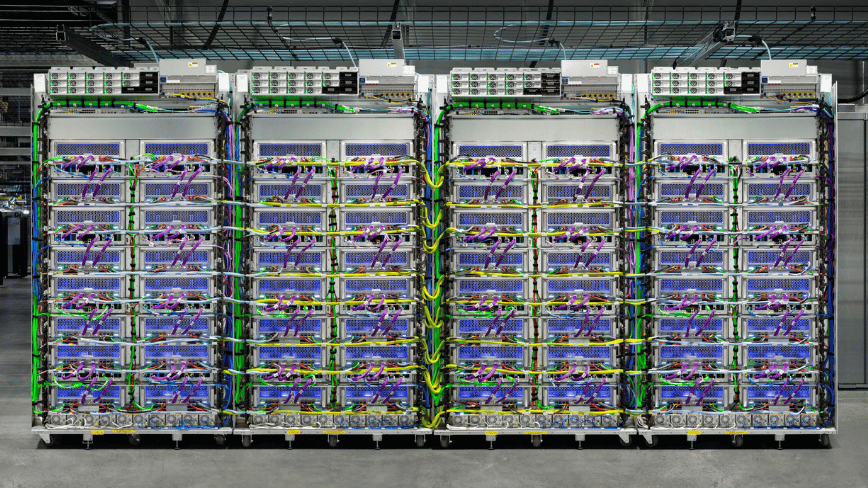

The announcement highlights the roles of all three parties involved—Anthropic, Google, and Broadcom. In this arrangement, Broadcom serves as the intermediary layer connecting Google’s custom silicon to Anthropic’s training and inference workloads. Concurrently, Broadcom has entered into a separate long-term agreement with Google to design and supply future generations of custom TPU chips, as well as a supply assurance agreement for networking components for Google’s next-generation AI data racks through 2031.

This positions Broadcom as a crucial component in the AI infrastructure landscape. The chipmaker, under the leadership of CEO Hock Tan, focuses on developing the silicon and interconnects that form the foundation for AI models rather than building the models themselves. Following the announcement, Broadcom shares rose approximately 3% in after-hours trading, indicating strong investor interest in companies that are positioned at the physical layer of the AI stack. Analysts from Mizuho, led by Vijay Rakesh, project that Broadcom could see $21 billion in AI revenue from Anthropic in 2026, which is expected to increase to $42 billion in 2027.

Broadcom first hinted at the scale of its relationship with Anthropic in September 2025, when Hock Tan revealed during an earnings call that a major customer had placed a $10 billion order for custom TPU racks. In December 2025, he confirmed that the customer was Anthropic and that it had also placed an additional order worth $11 billion. The April 2026 deal marks the third phase of this partnership, moving from a reported $21 billion in combined orders to a multi-gigawatt infrastructure plan with a clear delivery timeline.

Driving Revenue Growth

The compute deal comes against the backdrop of Anthropic’s rapid revenue growth. The company reported that its run-rate revenue has now exceeded $30 billion, up from approximately $9 billion at the end of 2025. This growth, more than a tripling in just a few months, can be attributed to an accelerating enterprise sales strategy that gained momentum after Anthropic closed its Series G funding round on February 12, 2026. The round raised $30 billion at a post-money valuation of $380 billion and was led by investors including GIC and Coatue.

Upon closing the Series G round, Anthropic disclosed that it had over 500 business customers, each spending more than $1 million annually. As of the April announcement, this number has exceeded 1,000, doubling in less than two months. The rapid pace of enterprise adoption is the underlying factor driving the need for expanded compute capacity: increased revenue necessitates greater inference capacity, which in turn requires more training compute, leading to the need for more gigawatts.

Multi-Cloud Strategy

What sets Anthropic apart from many competitors is its explicit multi-vendor chip strategy. Its AI model, Claude, is trained and served across three hardware platforms: Amazon’s Trainium chips, Google’s TPUs, and Nvidia GPUs. Anthropic asserts that Claude is the only frontier model available across all three major cloud platforms (AWS, Google Cloud, and Microsoft Azure), a position that provides both technical and commercial advantages.

This multi-vendor approach offers Anthropic resilience and negotiating power. In the event of capacity shortages on any platform, workloads can be shifted accordingly. If a chipmaker encounters supply chain disruptions or price fluctuations, Anthropic is insulated from the full impact of those challenges. This strategy mirrors Microsoft’s approach to mitigating single-vendor dependencies, although in Microsoft’s case, it is focused on partnerships rather than hardware suppliers.

The relationship with AWS remains foundational for Anthropic. In late 2024, Anthropic designated Amazon as its primary cloud and training partner, with total investment from Amazon reaching $8 billion. Anthropic's Project Rainier supercomputer cluster, which utilizes approximately 500,000 Amazon Trainium 2 chips in Indiana, was projected to scale beyond one million chips by the end of 2025. The new agreement with Google, now expanded through the Broadcom deal to a multi-gigawatt scale by 2027, complements rather than replaces the AWS partnership.

Commitment to US Infrastructure

The April 2026 agreement is explicitly framed as an extension of Anthropic’s November 2025 commitment to invest $50 billion in US-based AI computing infrastructure. This initiative, developed in collaboration with Fluidstack, focuses on establishing data center sites in Texas and New York, which are expected to come online through 2026. The new capacity from Broadcom, primarily based in the US, will further expand Anthropic's infrastructure footprint into 2027 and beyond.

This emphasis on domestic infrastructure aligns with the AI Action Plan set forth by the Trump administration, which has prioritized US-based compute capacity as a strategic goal. Anthropic, along with its competitors, has positioned its infrastructure investments to reflect this alignment. Whether this reflects genuine strategic conviction, a tactical response to regulatory pressures, or both, the outcome remains significant: a substantial portion of the world’s next-generation AI training capacity is being anchored within the United States.

Implications of the Compute Arms Race

The deal between Anthropic, Google, and Broadcom is part of a growing trend observed over the past 18 months. For instance, SoftBank’s $40 billion bridge loan to support its commitment to OpenAI reflects the same dynamics: AI laboratories have expanded so rapidly that their compute needs now exceed what can be funded through revenue alone, necessitating financial engineering akin to that of infrastructure utilities. Similarly, Meta’s $27 billion infrastructure deal with Nebius illustrates a comparable trend at the hyperscaler level.

This compute arms race is also influencing how AI companies manage their relationships with the services built on top of their models. Anthropic has been proactive in addressing these dynamics, recently restricting access to Claude through certain third-party frameworks. This decision highlights the financial pressures associated with frontier model inference, compelling AI labs to make challenging choices about which use cases to subsidize and which to price explicitly.

For Broadcom, the trajectory is simpler. Once not widely recognized in the AI context, Broadcom has emerged as a critical element of the infrastructure powering two of the most influential AI models in the industry: Google's Gemini and Anthropic's Claude. This position, solidified through the agreements with Google and Anthropic, makes Broadcom the preferred partner for custom silicon in hyperscale AI compute. While Nvidia remains a dominant player in AI accelerators, Broadcom's rise marks a significant shift in the semiconductor industry landscape.

Source: TNW | Anthropic News