Sam Altman's 13-page policy document, titled ‘Industrial Policy for the Intelligence Age,’ outlines measures to address the challenges posed by the rise of superintelligent AI. The document suggests auto-triggering safety nets, strategies for managing rogue AI, and direct dividends for citizens stemming from AI-driven economic growth. Altman stated that this is merely a starting point for discussion rather than a definitive solution.

OpenAI's policy blueprint advocates significant economic reforms in anticipation of the transformative changes expected from advanced AI. It proposes taxes on automated labor, a national public wealth fund partially funded by AI companies, and trials of a 32-hour work week.

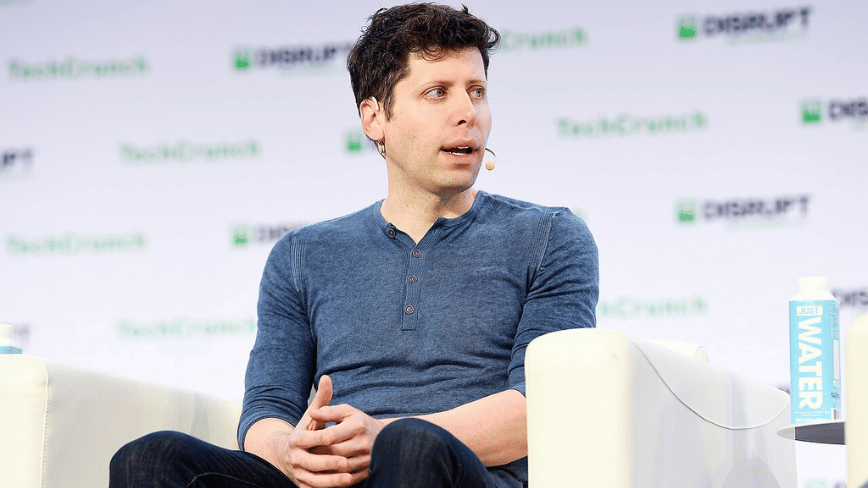

Titled ‘Industrial Policy for the Intelligence Age: Ideas to keep people first,’ the document was published as Congress prepares to debate AI legislation. In an exclusive interview, CEO Sam Altman likened the impending changes from AI to those seen during the Progressive Era and the New Deal, and he highlighted imminent risks such as AI-enabled cyberattacks and advanced biological threats.

One of the most groundbreaking proposals in the document is the creation of a public wealth fund. OpenAI suggests that the government establish a nationally managed fund, seeded with contributions from AI companies, that would invest in both AI firms and businesses adopting the technology. The returns from these investments would be distributed directly to American citizens, much as Alaska’s Permanent Fund pays annual dividends to residents from oil revenues.

On labor, the document recommends taxing automated labor and shifting the tax burden from payroll to capital gains and corporate income. The shift reflects the potential for AI to erode the payroll tax revenue that currently supports Social Security.

The proposal for a 32-hour work week is framed as an ‘efficiency dividend’ arising from productivity gains driven by AI.

The policy document also addresses the risks of AI, proposing what it calls ‘containment playbooks’ for scenarios in which dangerous AI systems become autonomous and self-replicating. OpenAI acknowledges that some AI systems may become uncontrollable and calls for coordinated government responses to such threats.

Moreover, the blueprint envisions automatic safety nets that would activate when AI-induced displacement metrics reach certain thresholds. Benefits like unemployment payments and wage insurance would adjust automatically, increasing during times of economic instability and tapering off as conditions improve.
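The trigger mechanism described above can be pictured with a rough sketch. Everything in it is an assumption made for illustration: the article gives no formula, metric definition, or threshold values, so the `TriggerRule` fields, the linear ramp, and the example numbers are hypothetical rather than anything taken from OpenAI's document.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TriggerRule:
    """Hypothetical rule; all numbers are illustrative, not from the document."""
    activate_at: float  # displacement rate at which extra support begins
    full_at: float      # displacement rate at which support reaches its maximum
    max_boost: float    # maximum fractional increase over the base benefit


def benefit_multiplier(displacement_rate: float, rule: TriggerRule) -> float:
    """Return the factor applied to a base benefit for a given displacement rate.

    At or below `activate_at` the factor is 1.0 (no boost). Between the two
    thresholds it rises linearly, and at or above `full_at` it is capped at
    1.0 + max_boost. Because the factor depends only on the current rate,
    the boost tapers off on its own as the metric falls.
    """
    if rule.full_at <= rule.activate_at:
        raise ValueError("full_at must be greater than activate_at")
    if displacement_rate <= rule.activate_at:
        return 1.0
    if displacement_rate >= rule.full_at:
        return 1.0 + rule.max_boost
    progress = (displacement_rate - rule.activate_at) / (rule.full_at - rule.activate_at)
    return 1.0 + rule.max_boost * progress


# Assumed example values: support starts at 2% displacement and peaks at 6%.
rule = TriggerRule(activate_at=0.02, full_at=0.06, max_boost=0.5)
for rate in (0.01, 0.04, 0.08, 0.03):
    print(f"displacement {rate:.0%} -> benefit x{benefit_multiplier(rate, rule):.3f}")
```

In this sketch the multiplier is stateless, so benefits rise and fall with the metric without any separate decision to switch them off. A real scheme would also have to define how displacement is measured and whether changes are smoothed over time, neither of which the article specifies.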

During the interview, Altman cautioned that a major cyberattack facilitated by advanced AI models is ‘totally possible’ within the next year. He also expressed concern that AI might be used to engineer novel pathogens, a risk that is moving from theoretical discussion to real-world possibility.

Altman was frank about the dual nature of the document, noting that OpenAI is at the forefront of developing the very technologies it is cautioning against. He acknowledged that positioning the company as a responsible actor proposing solutions could be a strategic move to influence regulatory frameworks before they are established. This approach has been echoed by other organizations in the AI space.

The release of this policy paper coincides with OpenAI's preparations for an initial public offering (IPO) and follows a substantial $110 billion funding round. The company is also facing scrutiny over its transition from a non-profit model.

Whatever OpenAI's motives, altruistic or strategic, Altman said of the document's ideas, ‘Some will be good. Some will be bad. But we do feel a sense of urgency. And we want to see the debate of these issues really start to happen with seriousness.’